Ukraine Built an Automated Turret That Shoots Fiber-Optic Drones on the Front

The AI-powered ballistic computer does all the work of targeting

This article is one of three weekly exclusive articles for my paid subscribers. Thank you for continuing to fund independent military analysis with a heavy dose of pro-Ukrainian sentiment and a side of anti-authoritarian humor.

The blurry footage is not dramatic in the way you’d expect. There’s the normal royalty-free techno music customary in videos coming out of Eastern Ukraine, along with some targeting of Russian junk.

But once you understand what you’re watching, it gets considerably more exciting: a gun turret that sees a drone before the drone sees it, calculates where the drone will be when the bullets arrive, and then asks a human operator for permission to end the conversation.

The operator obliges.

The drone ceases to exist.

That sequence (detect, calculate, confirm, fire) is the story. Not the blurry bang.

What the Video Is Actually Showing

First, here’s the video in question:

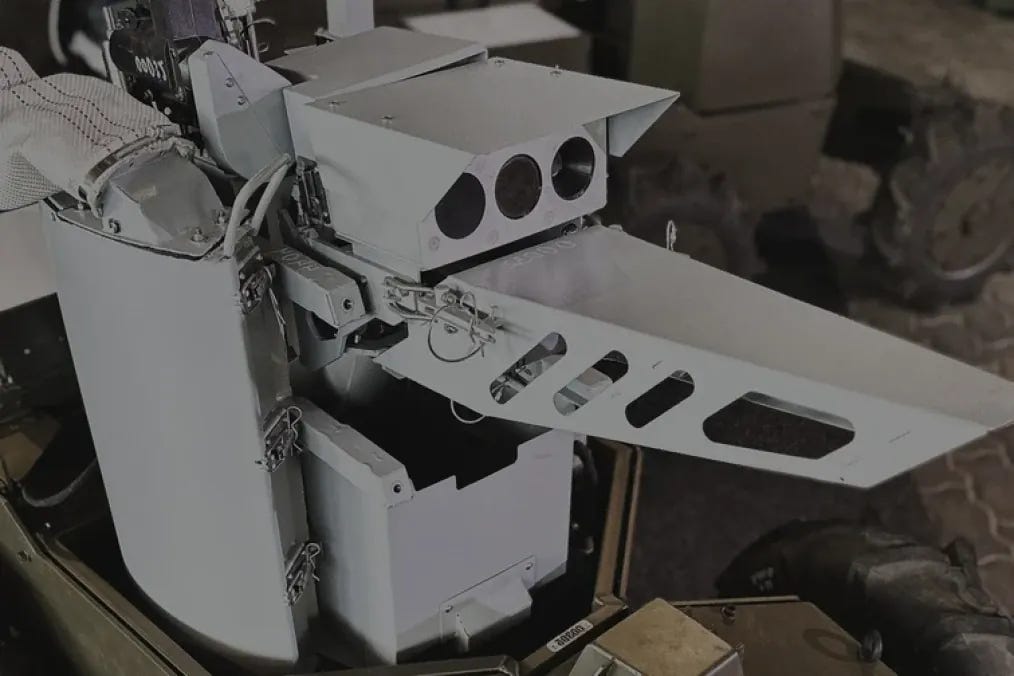

The system in the footage is the Ukrainian “Khyzhak,” which translates to “Predator,” developed by UGV Robotics through Ukraine’s Brave1 defense innovation ecosystem.

If you’ve followed Ukrainian military technology coverage at all over the past eighteen months, you may have seen the name.

What you probably haven’t seen until now is this: the thing working in what appears to be an actual combat engagement, against the target type it was explicitly designed to kill.

The target type is the fiber-optic FPV drone.

Russian forces were among the first to use fiber-optic FPV drones in the Ukraine war, with battlefield testing around spring 2024. It was one of their rare successes at iterating on an existing problem: how to get around Ukrainian electronic warfare.

A fiber-optic FPV spools out a thin optical fiber behind it as it flies, using that wire as the control channel instead of a radio link. With no radio signal to jam, the only defense that works against it is kinetic: you have to physically intercept it. Or cut the wire with some scissors, lol.

That is what the Khyzhak is designed to do. Hit it, that is.

The Khyzhak is built around a 7.62mm machine gun; UGV Robotics has specified PKT, M240, or FN MAG variants depending on what’s available.

The turret carries 700 rounds and is reported to fire in extremely short bursts, typically one to two or three to four rounds per engagement. On paper, that means a single ammo load could intercept more than 100 drones, a claim several news outlets have repeated.
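For a sense of what that number implies, here is the back-of-the-envelope arithmetic as a short Python sketch. It uses only the reported figures (700 rounds, one to four rounds per engagement) and makes the generous assumption that every engagement is a kill with no misses and no second bursts. The function name is mine, purely for illustration.

```python
import math

MAGAZINE_ROUNDS = 700  # ammo load reported for the turret


def max_engagements(rounds_per_engagement: float) -> int:
    """Upper bound on engagements per ammo load.

    Assumes every engagement succeeds on the first burst (an optimistic
    assumption, not a reported performance figure).
    """
    return math.floor(MAGAZINE_ROUNDS / rounds_per_engagement)


for burst in (1, 2, 3, 4):
    print(f"{burst} round(s) per drone -> up to {max_engagements(burst)} drones")

# How many rounds per drone can the turret "afford" and still reach 100 kills?
print(MAGAZINE_ROUNDS / 100)  # 7.0
```

Put differently, the 100-drone figure holds only if the turret averages seven rounds or fewer per drone destroyed, misses included.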

The theoretical part of that claim deserves some skepticism, which I’ll return to shortly. But the gun itself is almost incidental to the engineering story here.

The hard part is the sensor package, and specifically, what the sensor package is being asked to do.

The Khyzhak mounts two thermal imaging cameras.

The first is a wide-angle sensor with a 48-degree field of view: the detection layer, the thing scanning the environment for anything that doesn’t look like background noise.

The second is a 15-degree narrow-angle camera for precision aiming, used once the system has decided something is worth shooting at.
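To make those two angles concrete, the sketch below works out how wide a slice of the world each camera sees at a given distance. The 48 and 15 degree figures are the reported ones; the 800-meter distance is the maximum range reported for the system. The function name is hypothetical, and the geometry is just the flat-field formula, width = 2 × range × tan(FOV / 2).

```python
import math


def fov_width_m(fov_deg: float, range_m: float) -> float:
    """Width of the scene covered by a camera with the given field of view."""
    return 2.0 * range_m * math.tan(math.radians(fov_deg) / 2.0)


for name, fov in (("wide (48 deg)", 48.0), ("narrow (15 deg)", 15.0)):
    print(f"{name}: about {fov_width_m(fov, 800.0):.0f} m across at 800 m")
```

At 800 meters the wide camera is watching a strip roughly 710 meters across, while the narrow one covers roughly 210 meters. Same drone, far more of the narrow camera's image on it, which is the whole point of the handoff.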

Between those two cameras, a laser rangefinder, and a ballistic computer, the turret is performing a firing-solution calculation that would have required a dedicated crew of specialists with manual instruments a generation ago.

When an FPV drone is approaching at speed, the engagement window is measured in seconds. A human gunner on a pintle mount can see the drone, swing the barrel toward it, and fire. That’s achievable.

What a human gunner can’t reliably do is calculate, in real time, where the drone will be when the bullets cross its flight path, factoring in range, angular velocity, wind, platform movement, and the projectile’s own time-of-flight.

Actually, some highly experienced or gifted human gunners can do this. The brain is constantly performing calculations that we’re not aware of. But the Khyzhak’s ballistic computer is doing that math for the rest of us. The thermal sensors are tracking the drone’s trajectory continuously, feeding that track data into the fire control system, and the system is outputting a firing solution: the precise point in space where the bullets need to arrive to intercept the drone.
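For the curious, here is a minimal sketch of the simplest version of that math. To be clear, this is not the Khyzhak's actual algorithm, which hasn't been published. It is the textbook first-order lead calculation, and it assumes the drone flies in a straight line at constant speed and the bullet travels at a constant average speed with no drag, drop, or wind. The function name and the example numbers (a drone crossing at 30 m/s, bullets averaging 800 m/s) are my own assumptions for illustration.

```python
import math


def lead_point(p, v, bullet_speed):
    """Return (aim_point, time_of_flight), or None if no intercept exists.

    p: drone position relative to the gun, in meters (x, y, z)
    v: drone velocity in m/s, assumed constant
    bullet_speed: average bullet speed in m/s, assumed constant

    Solves |p + v*t| = bullet_speed * t for the smallest positive t.
    """
    a = sum(vi * vi for vi in v) - bullet_speed ** 2
    b = 2.0 * sum(pi * vi for pi, vi in zip(p, v))
    c = sum(pi * pi for pi in p)

    if abs(a) < 1e-9:            # drone and bullet speeds are equal
        if b >= 0:
            return None
        t = -c / b
    else:
        disc = b * b - 4.0 * a * c
        if disc < 0:
            return None          # the bullet can never catch the drone
        root = math.sqrt(disc)
        times = [t for t in ((-b - root) / (2 * a), (-b + root) / (2 * a)) if t > 0]
        if not times:
            return None
        t = min(times)

    aim = tuple(pi + vi * t for pi, vi in zip(p, v))
    return aim, t


# Hypothetical example: drone 400 m out, crossing left to right at 30 m/s.
aim, tof = lead_point((400.0, 0.0, 0.0), (0.0, 30.0, 0.0), 800.0)
print(f"time of flight {tof:.2f} s, aim {aim[1]:.1f} m ahead of the drone")
```

Even in this stripped-down case, the gun has to aim about 15 meters ahead of where the drone currently is. A real fire control system layers drag, gravity, wind, and platform motion on top, and has to redo the calculation continuously as the track updates.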

The system is doing what a radar-guided anti-aircraft gun does, but miniaturized, AI-driven, and built for the specific geometry of small drones at ranges up to 800 meters.

It doesn’t decide to fire on its own. The engagement model places a human operator at the terminal step: the system detects, tracks, and calculates, then presents a targeting solution and waits. The operator confirms engagement with a single command. One button, one decision, one drone.
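As a rough illustration of that engagement model (and only an illustration, since the turret's real software is not public), the flow can be sketched as a pipeline in which every stage runs automatically except the last. All names here are hypothetical.

```python
from enum import Enum
from typing import Callable, List


class Stage(Enum):
    DETECT = "detect"
    TRACK = "track"
    SOLVE = "solve"
    AWAIT_CONFIRM = "await_confirm"
    FIRE = "fire"
    STAND_DOWN = "stand_down"


def run_engagement(operator_confirms: Callable[[], bool]) -> List[Stage]:
    """Walk through one engagement and return the stages visited."""
    # Machine-only stages: these happen without any human input.
    stages = [Stage.DETECT, Stage.TRACK, Stage.SOLVE, Stage.AWAIT_CONFIRM]
    # The single human decision: no confirmation, no shot.
    stages.append(Stage.FIRE if operator_confirms() else Stage.STAND_DOWN)
    return stages


print([s.value for s in run_engagement(lambda: True)])
print([s.value for s in run_engagement(lambda: False)])
```

In this sketch the human is one function call at the end of an otherwise automatic chain.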

This is sometimes dismissed as a technicality or a PR hedge because the West gets squeamish when you discuss machines making the kill decision. But we’re not talking about human targets here. Personally, I have no problem with letting the machine off the leash to kill other machines.

Regardless, it’s likely a small technical matter to remove the human; the machine is already doing most of the work short of actually releasing rounds downrange.